Hi, my name is Paweł

Twenty years across QA, product, and engineering leadership took me from Warsaw to Cairo. Here's why I started writing about it.

I moved to Egypt about five years ago. Got married here, built a life, found a routine. My friends speak Arabic or English. My wife speaks English. When my Polish parents came to visit us in Dahab, they spoke neither.

For two weeks I was the only language bridge between my parents and everyone else. Every conversation, every restaurant order, every interaction with my friends or my wife: all of it went through me. By the end of the trip I was exhausted.

We tried Google Translate. It didn’t really work. The back-and-forth of typing or waiting for it to process was awkward and slow, and people gave up on it quickly. So I started thinking about what would actually work.

The idea was simple: two people who share no common language sit down, open an app, and just talk. Each person sees what the other is saying, translated into their language, live on their screen.

I went with a PWA because my family is split between Android and iPhone. A progressive web app runs on both, installs with one tap, and opens like a native app. No app store needed, and you can drop a shortcut on your desktop too.

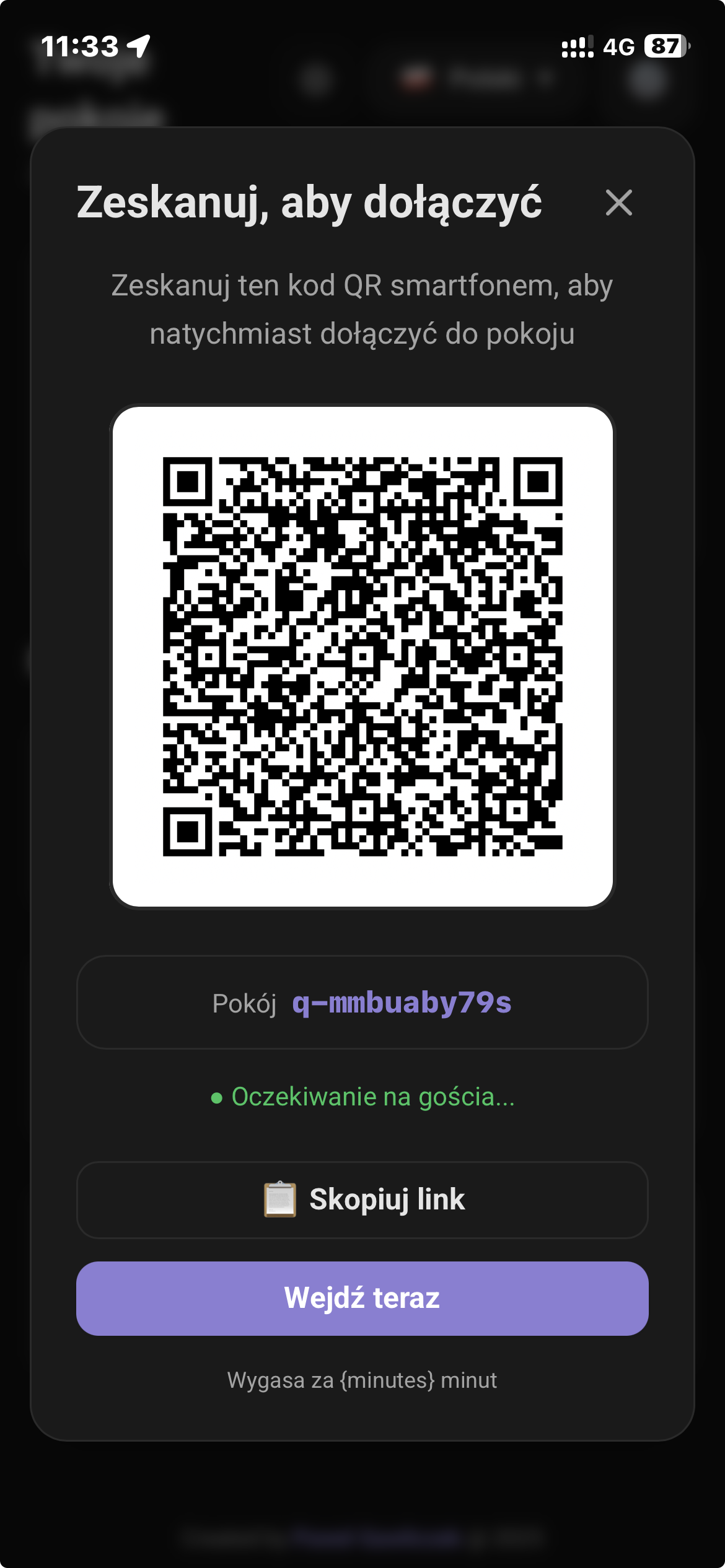

The flow I had in mind: my dad opens the app, hits “quick room,” and a QR code appears.

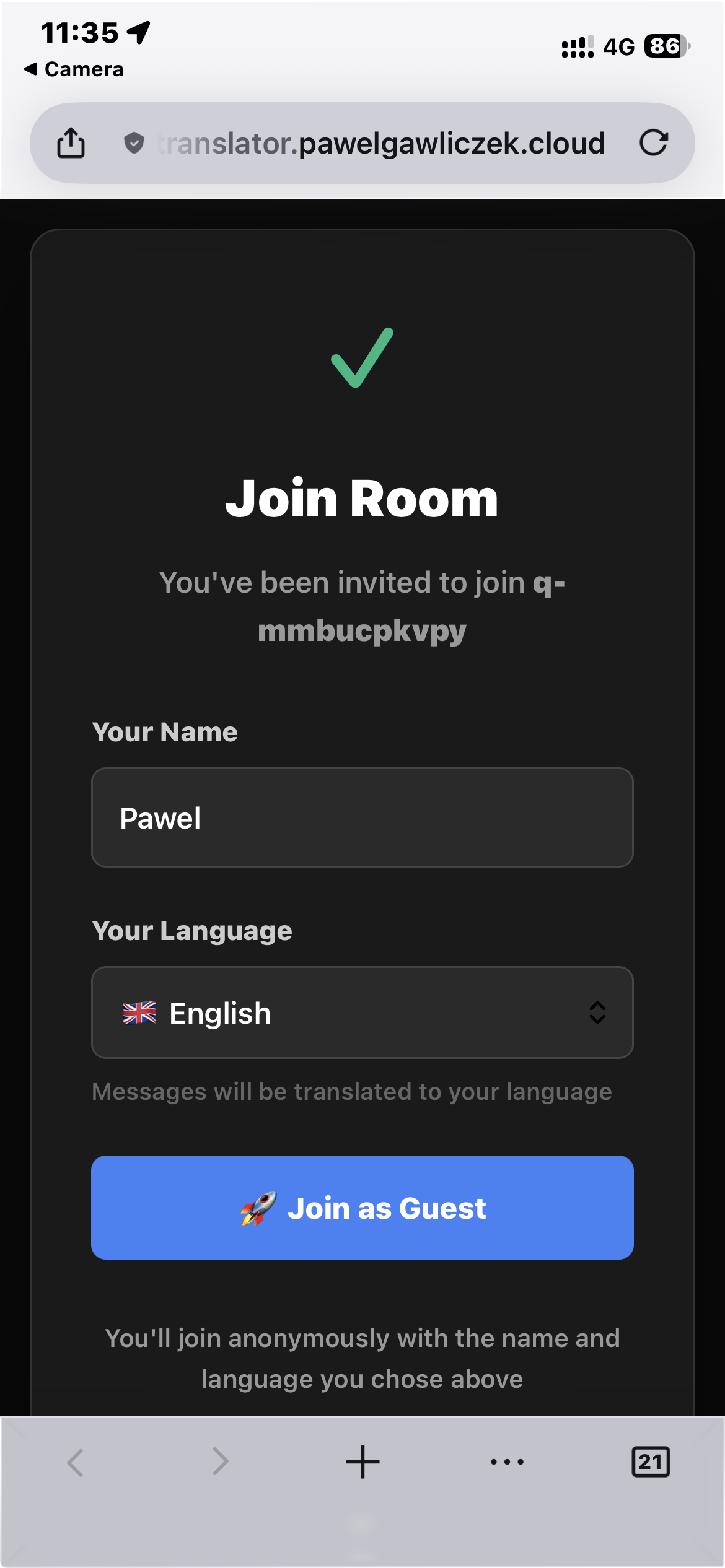

The other person scans it with any phone. A page loads asking them to pick their name and language.

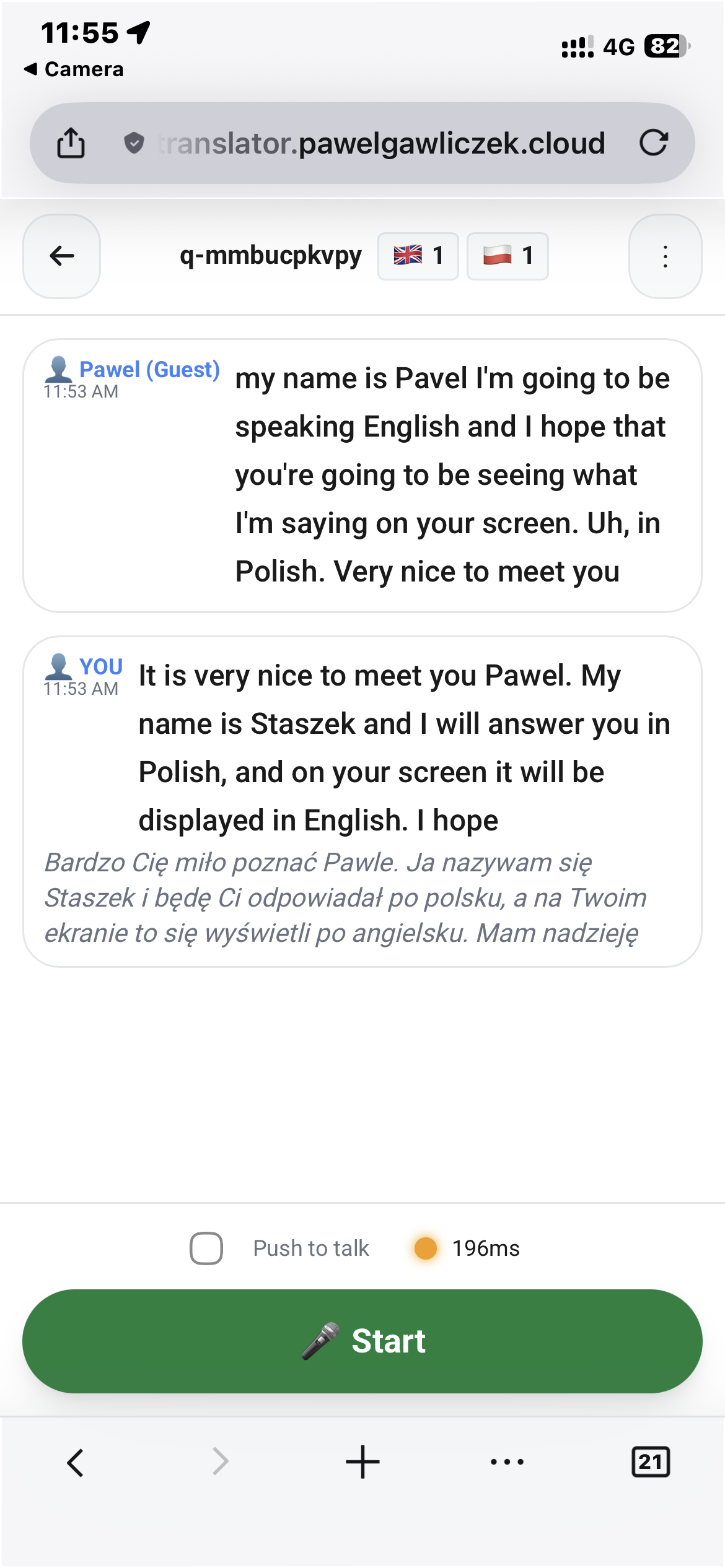

They hit “Join as Guest,” and both people are in the same room. When my dad speaks Polish, the other person sees it in their language within a couple of seconds. Here’s the English device, where someone speaking English gets their words translated to Polish for the other participant:

And here’s what it looks like from the Polish side, with someone speaking Polish and seeing English responses:

The app is hosted on a small server. When someone speaks, the audio goes to a speech-to-text engine that converts it to text. That text then gets sent to a machine translation API, and the translated result appears on the other person’s screen.
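The two hops described above can be sketched roughly like this. This is a minimal illustration, not LiveTranslator's actual code. The provider signatures (`SpeechToText`, `Translator`) and the `Caption` type are hypothetical stand-ins, because the post doesn't describe the real interfaces. The last hop, pushing the caption to the other person's screen (for example over a WebSocket), is left out.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical provider signatures: stand-ins for a real STT engine and MT API.
SpeechToText = Callable[[bytes, str], str]   # (audio, source_lang) -> text
Translator = Callable[[str, str, str], str]  # (text, source_lang, target_lang) -> text


@dataclass
class Caption:
    original: str
    translated: str
    source_lang: str
    target_lang: str


def process_utterance(
    audio: bytes,
    source_lang: str,
    target_lang: str,
    stt: SpeechToText,
    translate: Translator,
) -> Caption:
    """Speech -> text -> translated text; delivery to the other screen is not shown."""
    text = stt(audio, source_lang)                          # hop 1: speech-to-text
    translated = translate(text, source_lang, target_lang)  # hop 2: machine translation
    return Caption(text, translated, source_lang, target_lang)


if __name__ == "__main__":
    # Toy stand-ins so the sketch runs without any network calls.
    fake_stt = lambda audio, lang: audio.decode("utf-8")
    fake_translate = lambda text, src, dst: f"[{src}->{dst}] {text}"
    caption = process_utterance(b"dzien dobry", "pl", "en", fake_stt, fake_translate)
    print(caption.translated)  # [pl->en] dzien dobry
```

Passing the providers in as plain callables keeps the pipeline independent of any particular vendor. That independence is what allows the engines to be swapped per language pair.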

I tested a bunch of different STT and translation engines. Some were better for certain language pairs than others, so I built routing logic that picks the best provider depending on which two languages are in the conversation. DeepL worked well for Polish-English. GPT-4o-mini handled Arabic better.
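Routing by language pair can be as small as a lookup table. The sketch below is hypothetical. Only the two pairings mentioned in the post are filled in: DeepL for Polish-English and GPT-4o-mini whenever Arabic is involved. The provider name strings and the `"default-mt"` fallback are placeholders, not the real configuration.

```python
# Hypothetical routing config. Only the DeepL (pl-en) and GPT-4o-mini (Arabic)
# choices come from the post; the identifiers and fallback are placeholders.
PAIR_ROUTES: dict[frozenset[str], str] = {
    frozenset({"pl", "en"}): "deepl",
}
# Per-language preference used when no exact pair entry exists.
LANGUAGE_ROUTES: dict[str, str] = {
    "ar": "gpt-4o-mini",
}
DEFAULT_PROVIDER = "default-mt"  # placeholder fallback, not stated in the post


def pick_provider(source: str, target: str) -> str:
    """Return the provider name for a source/target language pair."""
    pair = frozenset({source, target})
    if pair in PAIR_ROUTES:            # an exact pair match wins
        return PAIR_ROUTES[pair]
    for lang in (source, target):      # then a per-language preference
        if lang in LANGUAGE_ROUTES:
            return LANGUAGE_ROUTES[lang]
    return DEFAULT_PROVIDER            # anything else uses the fallback
```

Keying pairs by `frozenset` makes the lookup direction-independent, so Polish→English and English→Polish share one entry. With this shape, supporting a new language means adding a dictionary entry, with no code changes.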

A single room supports participants speaking up to five different languages. Everyone sees what everyone else is saying, each in their own language. The whole loop, from speech to translated text on screen, takes under two seconds.
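Fanning one utterance out to a multilingual room can be sketched as follows. This sketch rests on two assumptions. First, it reads the five-way limit as five distinct languages per room. Second, it translates each utterance once per target language rather than once per listener. `Room`, `MAX_LANGUAGES`, and the `Translator` signature are hypothetical names, not taken from the project.

```python
from typing import Callable

Translator = Callable[[str, str, str], str]  # (text, source_lang, target_lang) -> text

MAX_LANGUAGES = 5  # assumption: the post's limit, read as distinct languages per room


class Room:
    """Minimal in-memory room: participant name -> language code."""

    def __init__(self) -> None:
        self.participants: dict[str, str] = {}

    def join(self, name: str, lang: str) -> None:
        langs = set(self.participants.values()) | {lang}
        if len(langs) > MAX_LANGUAGES:
            raise ValueError("room already has the maximum number of languages")
        self.participants[name] = lang

    def broadcast(self, speaker: str, text: str, translate: Translator) -> dict[str, str]:
        """Return what each other participant sees, in their own language."""
        source = self.participants[speaker]
        cache: dict[str, str] = {}  # one translation per target language, shared by listeners
        out: dict[str, str] = {}
        for name, lang in self.participants.items():
            if name == speaker:
                continue
            if lang == source:
                out[name] = text  # same language as the speaker: no translation needed
                continue
            if lang not in cache:
                cache[lang] = translate(text, source, lang)
            out[name] = cache[lang]
        return out
```

Caching per target language means two English-speaking listeners cost one API call, not two. This keeps both latency and cost roughly proportional to the number of languages in the room, not the number of people.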

A week after I started building, my dad had a working app. He used it for conversations with people who didn’t speak Polish, which is what I built it for. But then he started pointing the microphone at the TV during English-language ski events and reading Polish subtitles off his phone in real time. I hadn’t thought of that use case at all.

Most of the week went into testing different speech-to-text and translation providers and figuring out which combinations worked best for which language pairs. Getting the latency low enough that conversations felt natural rather than stilted took some iteration.
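A small harness can make this kind of provider comparison repeatable. The sketch below covers only the latency side: it times each candidate on sample phrases and reports the one with the lowest median. Judging translation quality, which the post also describes, still needs a human reading the output. `median_latency`, `fastest_provider`, and the provider callables are illustrative assumptions.

```python
import statistics
import time
from typing import Callable

Translator = Callable[[str, str, str], str]  # (text, source_lang, target_lang) -> text


def median_latency(translate: Translator, phrases: list[str], src: str, dst: str) -> float:
    """Median wall-clock seconds per call over a set of sample phrases."""
    samples = []
    for phrase in phrases:
        start = time.perf_counter()
        translate(phrase, src, dst)
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def fastest_provider(
    providers: dict[str, Translator], phrases: list[str], src: str, dst: str
) -> tuple[str, float]:
    """Return (provider name, median latency) for the fastest candidate on one pair."""
    results = {name: median_latency(fn, phrases, src, dst) for name, fn in providers.items()}
    best = min(results, key=lambda name: results[name])
    return best, results[best]
```

The median is less sensitive than the mean to the occasional slow network call, which matters when the goal is for a typical exchange to feel conversational.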

The PWA decision turned out to be right. No one in my family had to install anything or create an account. The QR code flow meant even my dad, who is not particularly technical, could start a room and get someone else connected in seconds.

Adding new languages was trivial. The routing layer already handled provider selection per language pair, so supporting Spanish or French was just configuration. The hard part was always the speech recognition quality, not the number of languages.

LiveTranslator was my second AI experiment. The first was a group translation bot for WhatsApp. Both solved the same problem: people around me couldn’t talk to each other, and I was tired of being the translator.

What surprised me is how much this got me back into building things. I’d been in engineering for years, but using AI to solve a problem my own family had woke something up. I wasn’t building for a client brief or a product roadmap. I was building because my dad couldn’t order coffee without me.

I know AI isn’t ready for everything. It hallucinates. It makes mistakes that would be unacceptable in a lot of enterprise contexts. But there’s this personal space, solving your own problems, building tools for the people around you, where the bar is different. It doesn’t need to be bulletproof. It needs to work well enough that my dad uses it instead of tapping me on the shoulder.

I think that space will grow. More people will realize they can build something real in a week. Not because AI writes perfect code, but because it shifts where you spend your time. You think about what the thing should do and whether it’s worth doing, not how to wire up WebSocket connections or parse audio streams. It’s almost a different kind of programming language, one where the conversation is about the problem instead of the plumbing.

That’s what got me hooked. That’s why I kept experimenting.

LiveTranslator is open source. You can check it out on the app page or grab the code from GitHub.
