Intonavio

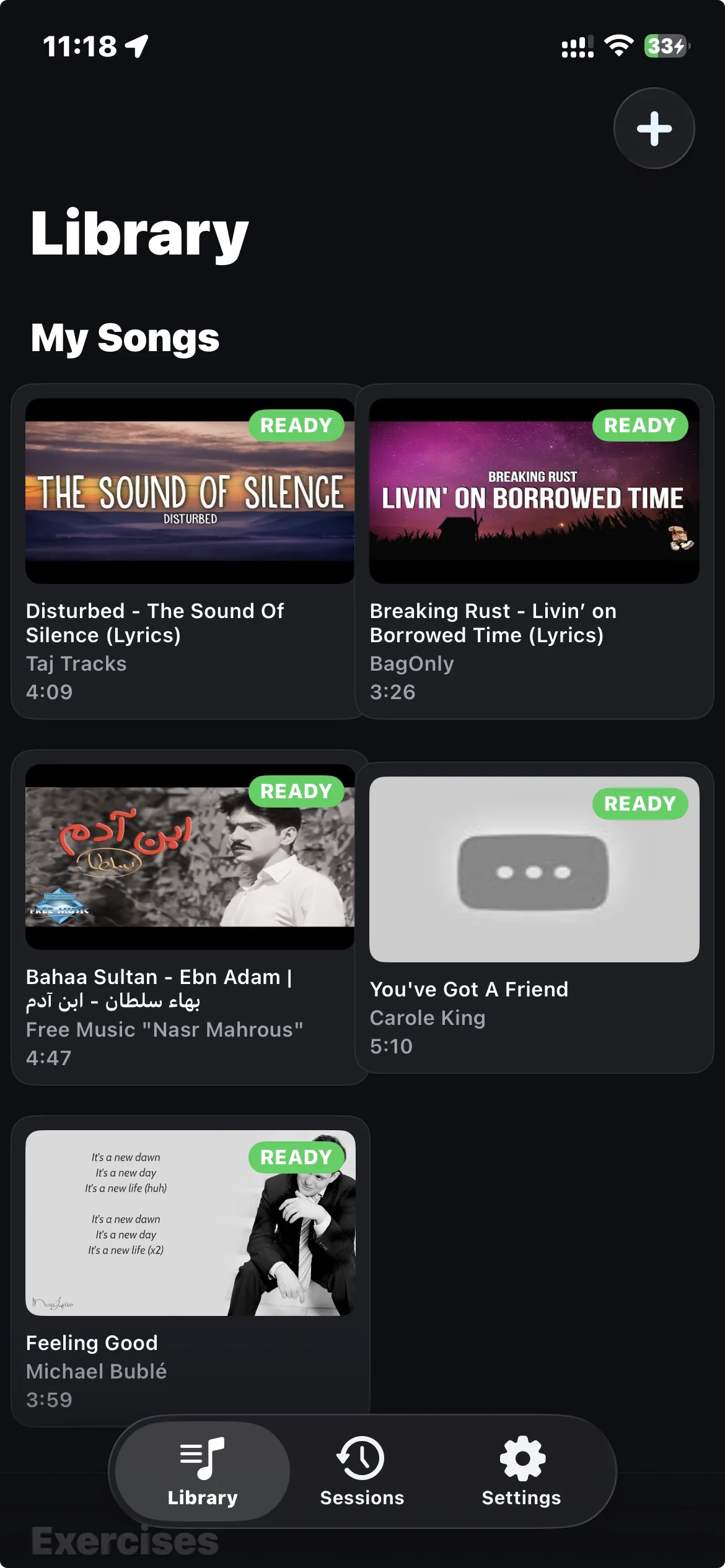

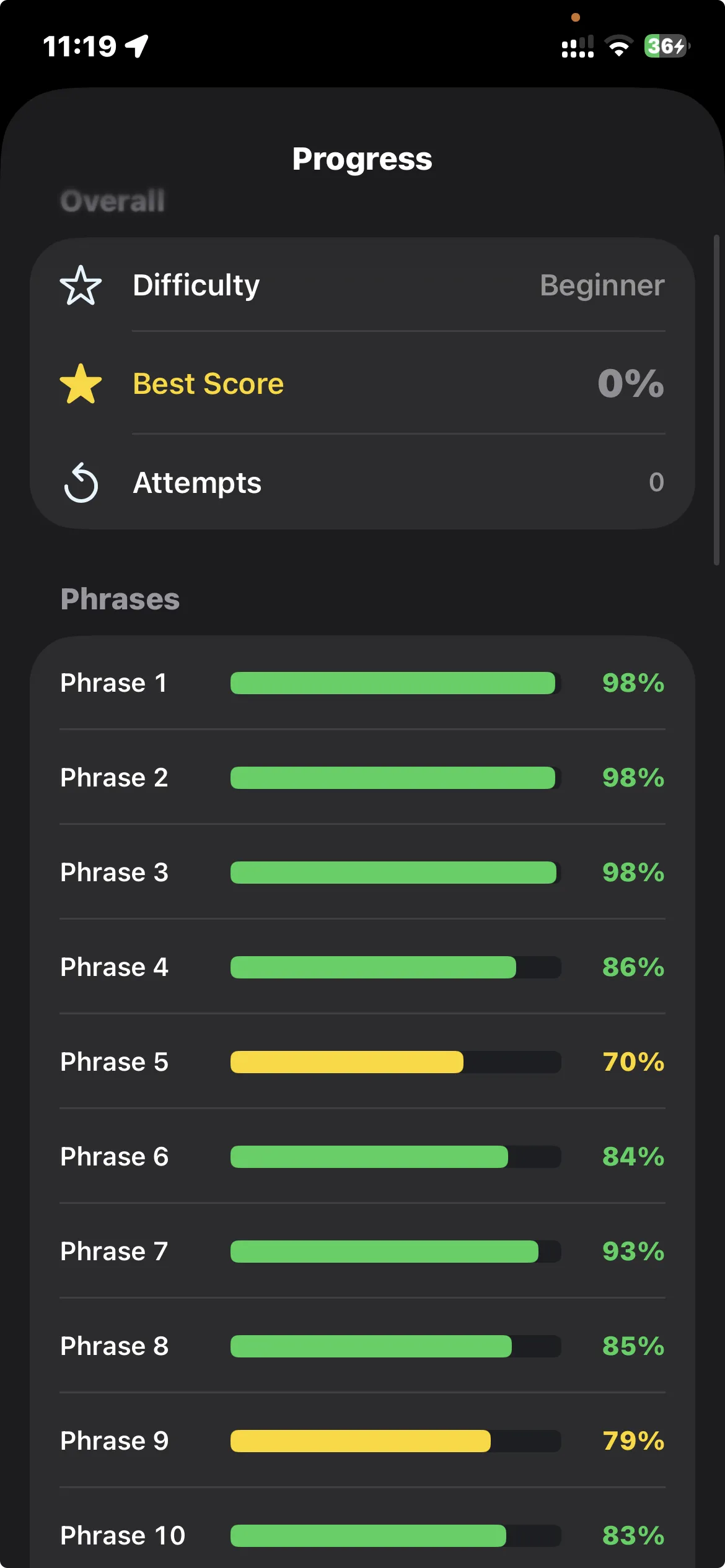

Intonavio is a singing practice app that turns any YouTube song into a pitch trainer. It separates the vocals from the backing music, plots the original singer's pitch on a graph, and draws your own pitch on top of it while you sing. I built it because practicing at home, without a teacher telling you "higher" or "lower", is mostly guesswork.
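To make the "higher, lower" idea concrete, here is a minimal sketch of how a sung pitch could be compared against the reference pitch. It is not taken from the app's code: the function names `cents_off` and `hint` and the 25-cent tolerance are assumptions chosen for illustration. It only relies on the standard definition of cents (1200 cents per octave).

```python
import math


def cents_off(sung_hz: float, target_hz: float) -> float:
    """Signed distance from the target in cents (100 cents = 1 semitone).

    Negative means the sung note is flat, positive means it is sharp.
    """
    return 1200.0 * math.log2(sung_hz / target_hz)


def hint(sung_hz: float, target_hz: float, tolerance_cents: float = 25.0) -> str:
    """Return the kind of feedback a teacher would give for one pitch sample.

    The tolerance is a hypothetical value, not one the app is known to use.
    """
    diff = cents_off(sung_hz, target_hz)
    if abs(diff) <= tolerance_cents:
        return "on pitch"
    # Flat singers need to go higher, sharp singers need to go lower.
    return "higher" if diff < 0 else "lower"
```

For example, `hint(415.30, 440.0)` compares a sung G#4 against a target A4, which is about a semitone flat, so the hint is to go higher.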